Codex vs Claude Code: pricing, autonomy, and fit (2026)

Editorial note: originally published in April 2026.

quick verdict

Codex is the better pick for most developers in 2026. Its GPT-5.3-Codex model is faster, pricing is more generous at the $20 tier, and the cloud agent adds sandboxed parallel task execution that Claude Code can't match.

If you want full local control, prefer terminal-native workflows, or rely on MCP integrations, Claude Code is the better choice: it runs on your own machine with no cloud sandbox, calls Anthropic's API only for inference, and has broader one-click MCP connector support.

Choose Codex if you want more headroom on a $20/mo plan without hitting limits.

Choose Claude Code if local filesystem access and privacy are non-negotiable.

pick your side

Codex (OpenAI) and Claude Code (Anthropic) are the two most capable agentic coding tools available in 2026. Both can plan, write, debug, and ship code autonomously, but they take different approaches to where your code lives, how the model reasons, and what you get at each pricing tier.

This comparison covers pricing, code quality, autonomy, editor integration, context handling, privacy, and team fit. It draws on real user feedback, published benchmarks, and hands-on testing across both tools.

feature comparison

We collect first-hand reviews from people who use these tools every day: what works, what doesn't, and whether it's worth paying for. We research pricing, features, and comparisons so that feedback has real context behind it. For this comparison, we prioritised real-world usage patterns around pricing limits, autonomy workflows, and privacy requirements, as these are the most common decision factors when choosing between these two tools.

pricing and plan value

Verdict: Codex wins

Codex: ChatGPT Plus $20/mo, Pro $200/mo

Claude Code: Claude.ai $17/mo, Max $100-$200/mo

Both tools offer a free tier, a roughly $20/mo mid tier, and a $100-$200/mo high tier. On paper they look equivalent. In practice, they're not.

Codex runs on ChatGPT plans (Plus at $20/mo, Pro at $200/mo). The GPT-5.3-Codex model is significantly more token-efficient than Claude Sonnet, which means you get more actual coding work done per dollar. Most $20 Codex users rarely hit limits. Most $17/mo Claude users hit limits regularly, and even the $100/mo Max plan gets ceiling complaints from heavy users.

The ChatGPT plans that include Codex also bundle image and video generation, the ChatGPT desktop app, and a more polished surrounding product. Claude's $17/mo plan includes Claude.ai access, but the desktop experience is comparatively basic. If you're evaluating pure coding value per dollar, Codex clearly wins the $20 tier. The $100+ comparison is closer now that Anthropic's Opus 4.6 is more efficient, but Codex still has the edge.

code quality and model performance

Verdict: draw

Codex: GPT-5.3-Codex, 4 reasoning levels

Claude Code: Opus 4.6 + Sonnet 4.6, 2 model choices

On SWE-bench Pro, Codex and Claude Code land in a similar range as of early 2026. On Terminal-Bench 2.0, Codex shows a noticeable lead on terminal-style tasks. Neither tool has a decisive overall edge on benchmark scores.

The subjective experience differs more than the numbers suggest. Codex tends to reason longer before it emits code, but tokens stream quickly once it starts. Claude Code reasons less upfront and streams output slightly more slowly, especially on Opus. GPT-5.3-Codex is also 25% faster than its predecessor and supports real-time steering mid-task.

Codex also exposes granular reasoning controls: minimal, low, medium, and high settings. This matters because over-reasoning on simple tasks wastes time and tokens. Claude Code gives you a model switch between Sonnet and Opus but no reasoning depth dial. For users who want fine-grained control over the speed-quality tradeoff, Codex has the better tooling. For raw output quality on complex multi-file tasks, the two are genuinely comparable.
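To make the speed-quality tradeoff concrete, here is a minimal sketch of how a team might choose among the four reasoning settings before dispatching a task. The setting names come from the paragraph above; the `pick_reasoning_level` function, its inputs, and its thresholds are hypothetical and not part of any Codex API.

```python
from enum import Enum


class ReasoningLevel(str, Enum):
    # The four settings named in the article.
    MINIMAL = "minimal"
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


def pick_reasoning_level(files_touched: int, needs_design: bool) -> ReasoningLevel:
    """Hypothetical heuristic: spend more reasoning only on broader tasks."""
    if needs_design:
        # Architecture or API design work gets the deepest setting.
        return ReasoningLevel.HIGH
    if files_touched <= 1:
        # Single-file tweaks rarely benefit from long deliberation.
        return ReasoningLevel.MINIMAL
    if files_touched <= 5:
        return ReasoningLevel.LOW
    return ReasoningLevel.MEDIUM
```

With these illustrative thresholds, a one-file rename maps to `minimal` and a cross-cutting redesign maps to `high`. The cut-offs are placeholders to tune against your own latency and token budgets.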

autonomy and agentic workflows

Verdict: draw

Codex: Cloud sandbox, isolated containers, parallel tasks

Claude Code: Local terminal, real filesystem, Agent Teams

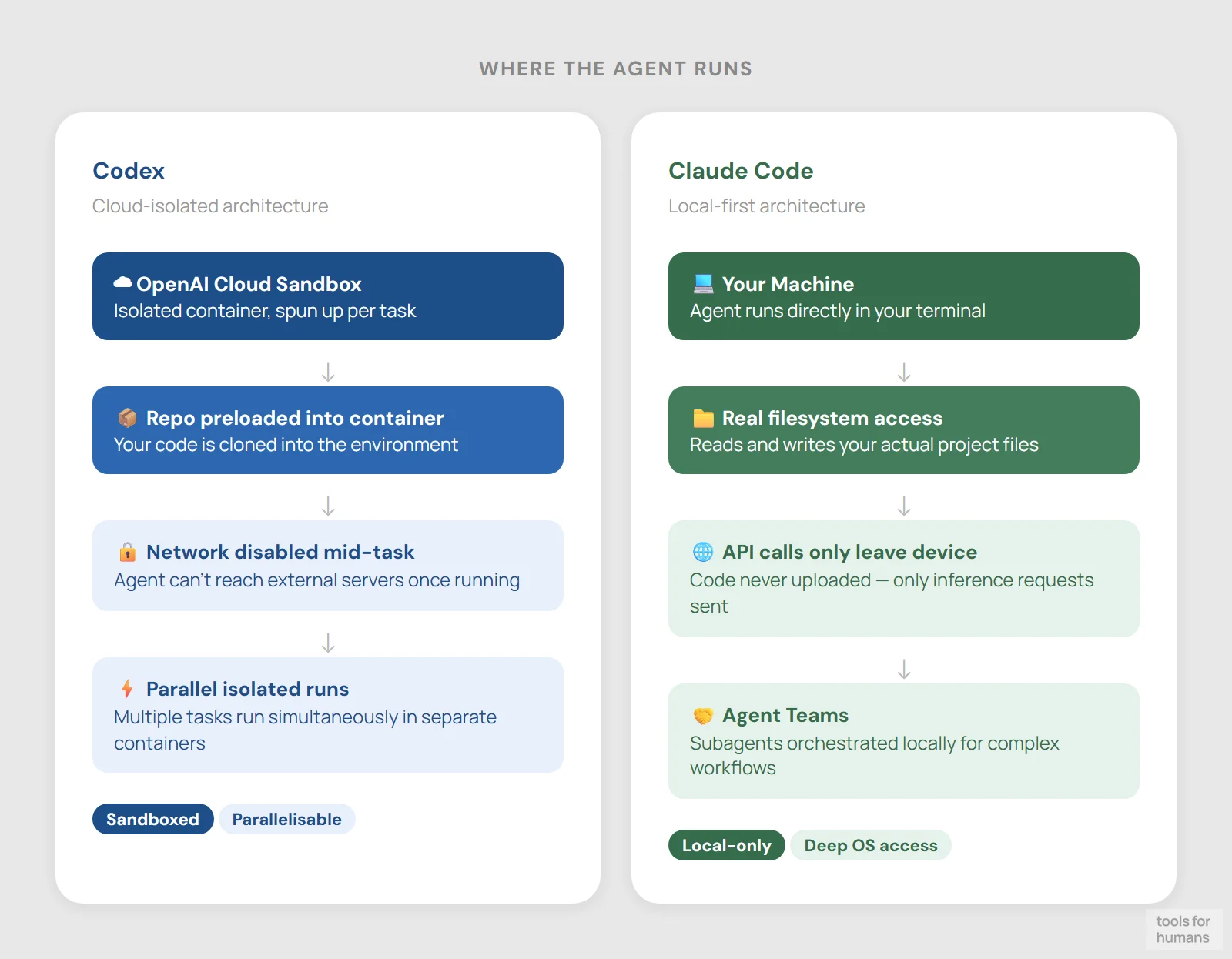

Both tools are full agentic systems, not autocomplete engines. The architectural difference is where the agent runs.

Codex cloud spins up an isolated container preloaded with your repository. Network access is disabled once the agent phase starts, preventing generated code from reaching external services. The agent completes the task and returns a pull request or diff. You can run multiple tasks in parallel across different repositories without tying up your local machine. Codex CLI also supports three autonomy modes: Suggest (propose only), Auto Edit (write files, ask before shell commands), and Full Auto (no interruptions).
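The three CLI autonomy modes amount to a small permission table. The sketch below models them as described above. It is an illustration only, not the CLI's actual implementation: the enum names and string values are hypothetical identifiers, and treating file reads as always allowed is an assumption.

```python
from enum import Enum


class Mode(Enum):
    SUGGEST = "suggest"      # propose only
    AUTO_EDIT = "auto-edit"  # write files, ask before shell commands
    FULL_AUTO = "full-auto"  # no interruptions


class Action(Enum):
    READ_FILE = "read"
    WRITE_FILE = "write"
    RUN_SHELL = "shell"


# Actions each mode may perform without asking the user first.
# Assumption: reading files never requires approval in any mode.
AUTO_APPROVED = {
    Mode.SUGGEST: {Action.READ_FILE},
    Mode.AUTO_EDIT: {Action.READ_FILE, Action.WRITE_FILE},
    Mode.FULL_AUTO: {Action.READ_FILE, Action.WRITE_FILE, Action.RUN_SHELL},
}


def needs_approval(mode: Mode, action: Action) -> bool:
    """Return True when the user must confirm the action in this mode."""
    return action not in AUTO_APPROVED[mode]
```

For example, `needs_approval(Mode.AUTO_EDIT, Action.RUN_SHELL)` is true while `needs_approval(Mode.AUTO_EDIT, Action.WRITE_FILE)` is false, matching the "write files, ask before shell commands" description.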

Claude Code runs entirely in your local terminal against your actual filesystem. It uses your local git, executes real shell commands, and calls Anthropic's API only for inference. There's no sandboxed container. This means the agent can access anything on your machine, which is powerful but requires more trust in what you're running. Claude Code also gained Agent Teams in early 2026, allowing multiple sub-agents to coordinate on parallel tasks locally. Both tools support AGENTS.md configuration files, so existing project configs transfer across either tool.

editor and environment integration

Verdict: Codex wins

Codex: Web, CLI, VS Code/Cursor, macOS app, GitHub

Claude Code: Terminal-native, VS Code/Cursor launcher only

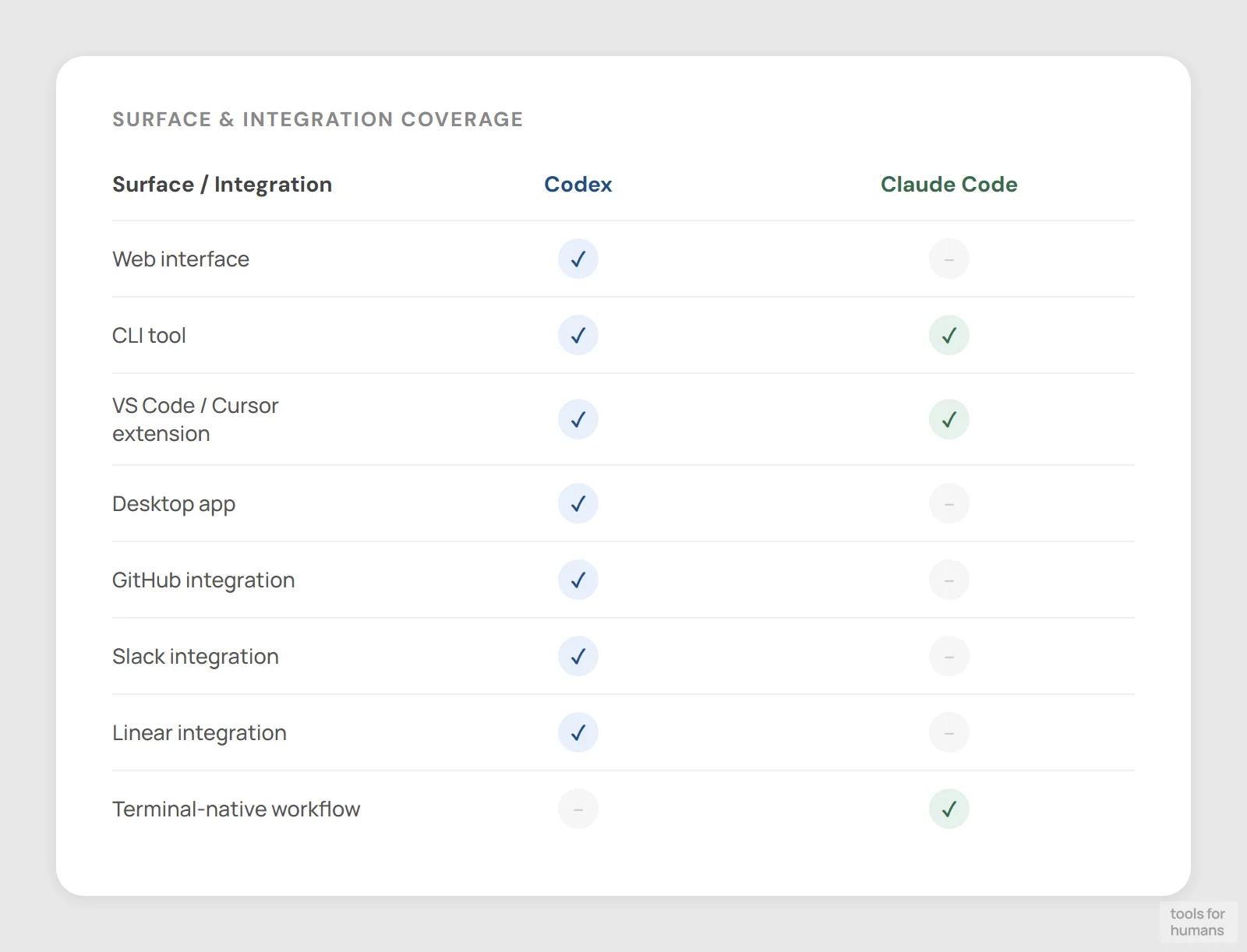

Codex integrates across four surfaces: the ChatGPT web agent at chatgpt.com/codex, an open-source CLI in Rust and TypeScript, VS Code and Cursor extensions, and a macOS desktop app launched February 2026. It also connects to GitHub, Slack, and Linear natively.

Claude Code is primarily a terminal tool. Its VS Code, Cursor, and Windsurf extensions exist but are essentially launchers, not deep IDE integrations. The real interface is the terminal UI, which supports @-tagging files, slash commands, and context control. You can run multiple Claude Code instances in parallel in different terminal panes as long as they're working on separate parts of the codebase.
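The "separate parts of the codebase" condition is easy to check before launching parallel instances. The following is a minimal sketch, assuming each instance is assigned one directory as its scope; the function names and example paths are hypothetical and are not part of Claude Code itself.

```python
from itertools import combinations
from pathlib import PurePosixPath


def overlaps(a: str, b: str) -> bool:
    """True if one directory scope equals or contains the other."""
    pa, pb = PurePosixPath(a), PurePosixPath(b)
    return pa == pb or pa in pb.parents or pb in pa.parents


def conflicting_scopes(scopes: dict[str, str]) -> list[tuple[str, str]]:
    """Return pairs of instance names whose directory scopes overlap."""
    return [
        (name1, name2)
        for (name1, dir1), (name2, dir2) in combinations(scopes.items(), 2)
        if overlaps(dir1, dir2)
    ]
```

Calling `conflicting_scopes({"pane-1": "src/api", "pane-2": "src/web", "pane-3": "src/api/auth"})` flags `pane-1` and `pane-3`, because the third scope sits inside the first. Comparing path components rather than string prefixes keeps `src/api` and `src/apiary` from being treated as overlapping.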

For developers who live in an IDE and want native code review, inline diffs, and GitHub PR integration, Codex has the more complete surface area. For developers comfortable in the terminal who want a lightweight, portable tool without IDE dependencies, Claude Code fits the workflow better. Neither tool replaces a full IDE for editing, but Codex comes closer to covering that surface.

context window and file handling

Verdict: Claude Code wins

Codex: Container-based repo access, context unspecified

Claude Code: 1M token context window in beta (Sonnet/Opus 4.6)

Context window size directly affects how much of your codebase the agent can reason about in one pass. Claude Code currently has an edge here: Claude Sonnet 4.6 and Opus 4.6 support a 1 million token context window in beta, up from 200K previously. Leaks also point to internal Anthropic work on a 2 million token model, though nothing is publicly available yet.

Codex's GPT-5.3-Codex context window is not publicly specified in the same way. In practice, the cloud agent loads your repository into an isolated container, which handles large codebases through file access rather than stuffing everything into context at once. This works well for most projects but is architecturally different from Claude's brute-force large context approach.

For monorepos or projects where you need the model to hold thousands of files in working memory simultaneously, Claude Code's 1M token context gives it a real advantage. For typical feature-level or bug-fix tasks, neither tool runs into practical limits.
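A rough way to tell which side of that line a project falls on is to estimate its token count from file sizes. The sketch below assumes about four bytes of source per token, which is a common rule of thumb rather than a property of either model's tokenizer. The reserved headroom and the skipped directory names are likewise hypothetical.

```python
import os

CHARS_PER_TOKEN = 4         # assumption: rough average for source code
CONTEXT_TOKENS = 1_000_000  # the 1M beta window discussed above
RESERVED_TOKENS = 200_000   # hypothetical headroom for prompts and replies
SKIP_DIRS = {".git", "node_modules", "dist", "build"}


def repo_bytes(root: str, suffixes: tuple[str, ...]) -> int:
    """Sum the on-disk size of matching files, skipping vendored/build dirs."""
    total = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune in place so os.walk does not descend into skipped dirs.
        dirnames[:] = [d for d in dirnames if d not in SKIP_DIRS]
        for name in filenames:
            if name.endswith(suffixes):
                total += os.path.getsize(os.path.join(dirpath, name))
    return total


def estimate_tokens(num_bytes: int) -> int:
    """Convert a byte count to an approximate token count."""
    return num_bytes // CHARS_PER_TOKEN


def fits_in_context(num_bytes: int) -> bool:
    """True if the estimate leaves the reserved headroom free."""
    return estimate_tokens(num_bytes) <= CONTEXT_TOKENS - RESERVED_TOKENS
```

A call such as `fits_in_context(repo_bytes(".", (".py", ".ts")))` gives a quick yes or no. Treat the result as a coarse screen, since real token counts vary by language and tokenizer.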

privacy and data handling

Verdict: Claude Code wins

Codex: Cloud sandbox uploads repo to OpenAI infra

Claude Code: Local only, API calls inference only

This is where the two tools differ most structurally. Claude Code keeps your repository on your machine. It reads your local filesystem, runs commands in your actual terminal, and sends only inference requests, which carry the code the model needs to see, to Anthropic's API. Your repository is never uploaded to a cloud environment for execution. For teams with strict data residency requirements or proprietary codebases, this is a meaningful distinction.

Codex cloud uploads your repository to an isolated container on OpenAI's infrastructure. The sandbox is network-disabled during agent execution, which limits some attack surface, but your source code does leave your machine. The Codex CLI can run locally, similar to Claude Code, and doesn't require the cloud sandbox. If privacy is the concern, the CLI is the right Codex surface to use.

Claude Code's local-first architecture is a genuine privacy advantage. OpenAI's sandbox has good isolation properties, but the upload step is a non-starter for some enterprises and regulated industries.

MCP and third-party integrations

Verdict: Claude Code wins

Codex: GitHub, Slack, Linear native; AGENTS.md support

Claude Code: Broad MCP support, many one-click connectors

Model Context Protocol (MCP) support is increasingly important for agents that need to pull context from external tools like databases, documentation systems, or internal APIs. Claude Code has broader MCP support with many one-click connectors. Anthropic has invested in making MCP a first-class feature, and the ecosystem of pre-built integrations is larger.

Codex integrates natively with GitHub, Slack, and Linear, which covers the most common developer workflow touchpoints. It reads AGENTS.md files, which are supported across tens of thousands of open-source projects and adopted by tools including Cursor and Aider. But its MCP connector library is smaller than Claude Code's.

For teams already running MCP-heavy workflows or building pipelines that tap into custom data sources, Claude Code is the better fit. For teams whose integration needs are covered by GitHub, Slack, and Linear, Codex's native connectors are likely sufficient.
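For readers unfamiliar with what "tapping into a custom data source" involves, the sketch below generates a project-level MCP configuration file. It assumes the JSON layout commonly used by MCP clients: a top-level `mcpServers` object keyed by server name, with `command`, `args`, and `env` for each entry. The server name, command, and module here are placeholders, so check your client's current documentation before relying on the exact shape.

```python
import json


def build_mcp_config(servers: dict[str, dict]) -> str:
    """Serialize server definitions under a top-level "mcpServers" key."""
    return json.dumps({"mcpServers": servers}, indent=2)


# Hypothetical server entry: the name, command, and module are placeholders.
example = build_mcp_config(
    {
        "internal-docs": {
            "command": "python",
            "args": ["-m", "internal_docs_server"],
            "env": {"DOCS_ROOT": "./docs"},
        }
    }
)
print(example)
```

Generating the file from code rather than editing it by hand makes it easier to keep one server list in sync across several repositories.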

the verdict

Choose Codex if you want the most capable agent at the $20 price point, need parallel cloud task execution across multiple repositories, or want GitHub and Slack integration without extra setup.

Choose Claude Code if your code can't leave your machine, you're working on a massive monorepo where 1M token context matters, or your team is already invested in MCP-based tooling and external integrations.

Choose Codex if you're evaluating both for a typical product engineering team: the pricing headroom, faster model throughput, and broader IDE surface make it the more practical daily driver for most developers in 2026.

toolsforhumans editorial team

Reader ratings and community feedback shape every score. Since 2022, ToolsForHumans has helped 600,000+ people find software that holds up after launch. The picks here come from that.
