Claude vs Perplexity: AI assistant vs search engine (2026)

Editorial note: originally published in April 2026

quick verdict

Claude is the better pick for most people doing extended writing, coding, or analysis work. Its reasoning depth and long-context handling are noticeably stronger than Perplexity's for tasks that don't require live web data.

If you need current information with cited sources — competitive research, news monitoring, or fact-checking recent events — Perplexity is the right tool. It's built around search, and that focus shows.

Choose Claude if you want a capable AI assistant for writing, code, and deep analysis.

Choose Perplexity if you need fast, sourced answers from the live web.

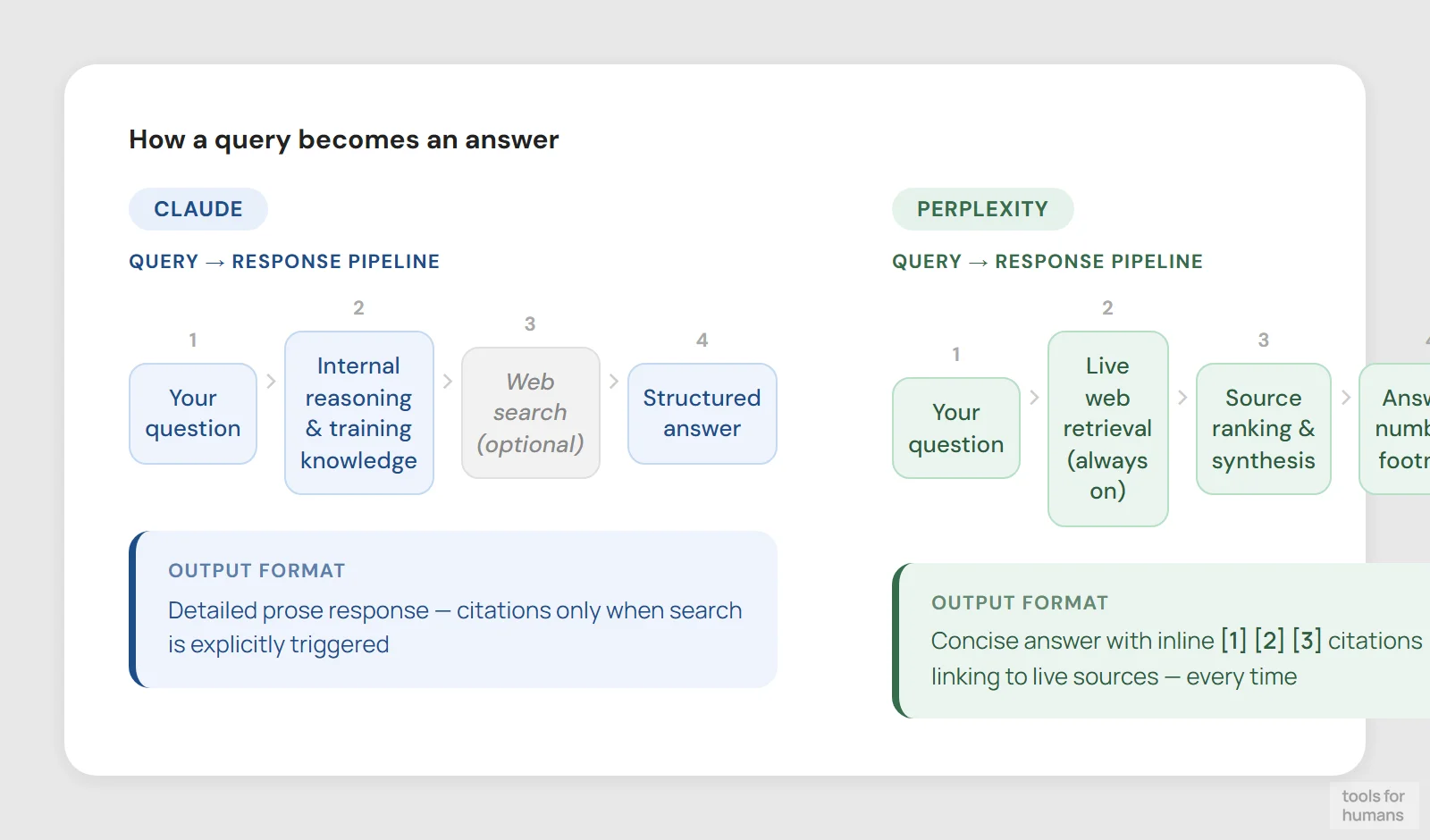

Claude (by Anthropic) and Perplexity are both AI tools, but they're built around different problems. Claude is a general-purpose AI assistant optimized for reasoning, writing, and long-form analysis. Perplexity is an AI-powered search engine that returns real-time answers with numbered citations to source pages.

They share a price point ($20/month for the paid tier) and a conversational interface, but the overlap mostly stops there. Choosing between them depends less on which is technically superior and more on what kind of work you're actually doing.

This comparison covers pricing, core capabilities, context handling, real-time search, writing quality, and the specific use cases where each tool has a clear advantage.

feature comparison

We collect first-hand reviews from people who use these tools every day — what works, what doesn't, whether it's worth paying for. We research pricing, features, and comparisons so that feedback has real context behind it. For this comparison, we prioritized tasks that reflect real professional use: sustained writing sessions, research with source verification, coding workflows, and multi-turn document analysis.

real-time search and citations

Winner: Perplexity
Claude: Web search available, not citation-first
Perplexity: Every answer cites numbered live sources

This is the sharpest difference between the two tools. Perplexity is built around web retrieval. Every answer pulls from live sources and includes numbered footnotes linking directly to the original pages. If you ask about last week's earnings report, a recent regulatory change, or today's news, Perplexity gives you a usable answer in seconds with verifiable sources.

Claude has web search on all plans including the free tier, but it's an added capability rather than the core product. Its training knowledge is broad and its reasoning on that knowledge is strong, but it wasn't architected for continuous source citation the way Perplexity was. In practice, Perplexity's sourcing workflow feels native; Claude's feels bolted on.

For anyone whose daily work involves monitoring current events, verifying recent statistics, or doing literature discovery, Perplexity's citation-first design is a real advantage. The sources are right there, clickable, and you can audit the answer immediately without a separate fact-check step.

writing and reasoning quality

Winner: Claude
Claude: 9.5/10 conversation quality on G2
Perplexity: Concise, search-optimized responses

Claude produces noticeably better long-form writing than Perplexity. Its outputs read like they were written by someone who understands structure, tone, and argument — not just someone who retrieved the right facts. For drafting reports, editing contracts, writing code with explanations, or working through a complex analytical problem, Claude is the stronger tool.

Perplexity's writing quality is adequate for search-style answers, but it isn't trying to compete here. Its responses tend to be concise and structured around what you asked rather than developing an argument or exploring nuance. That's appropriate for a search engine but limiting if you need more than a summary.

Claude's Constitutional AI approach also shows up in the quality of its reasoning: it tends to flag uncertainty rather than fill gaps with confident-sounding guesses. On G2, users rate Claude's natural conversation quality at 9.5 out of 10 versus 8.6 for Perplexity. That gap likely reflects a real difference in how each tool handles ambiguity, follow-up questions, and multi-step reasoning.

pricing and free tier

Winner: draw
Claude: Free tier, $20/mo Pro, Max from $100/mo
Perplexity: Free tier, $20/mo Pro, $10/mo Education

Both tools charge $20/month for their individual paid plans. The free tiers differ more than the paid ones. Claude's free tier gives you access to Claude Sonnet with web search, image analysis, file creation, and code execution, which makes it a capable tool on its own. The main limit is the message cap (roughly 30-100 messages, depending on message length and model load).

Perplexity's free tier is generous for basic searches but caps Pro Searches at 5 per day and restricts file uploads to 3 per day at 5MB max. Pro Search is where Perplexity uses advanced models and does deeper reasoning, so heavier users hit that cap quickly and find it frustrating.

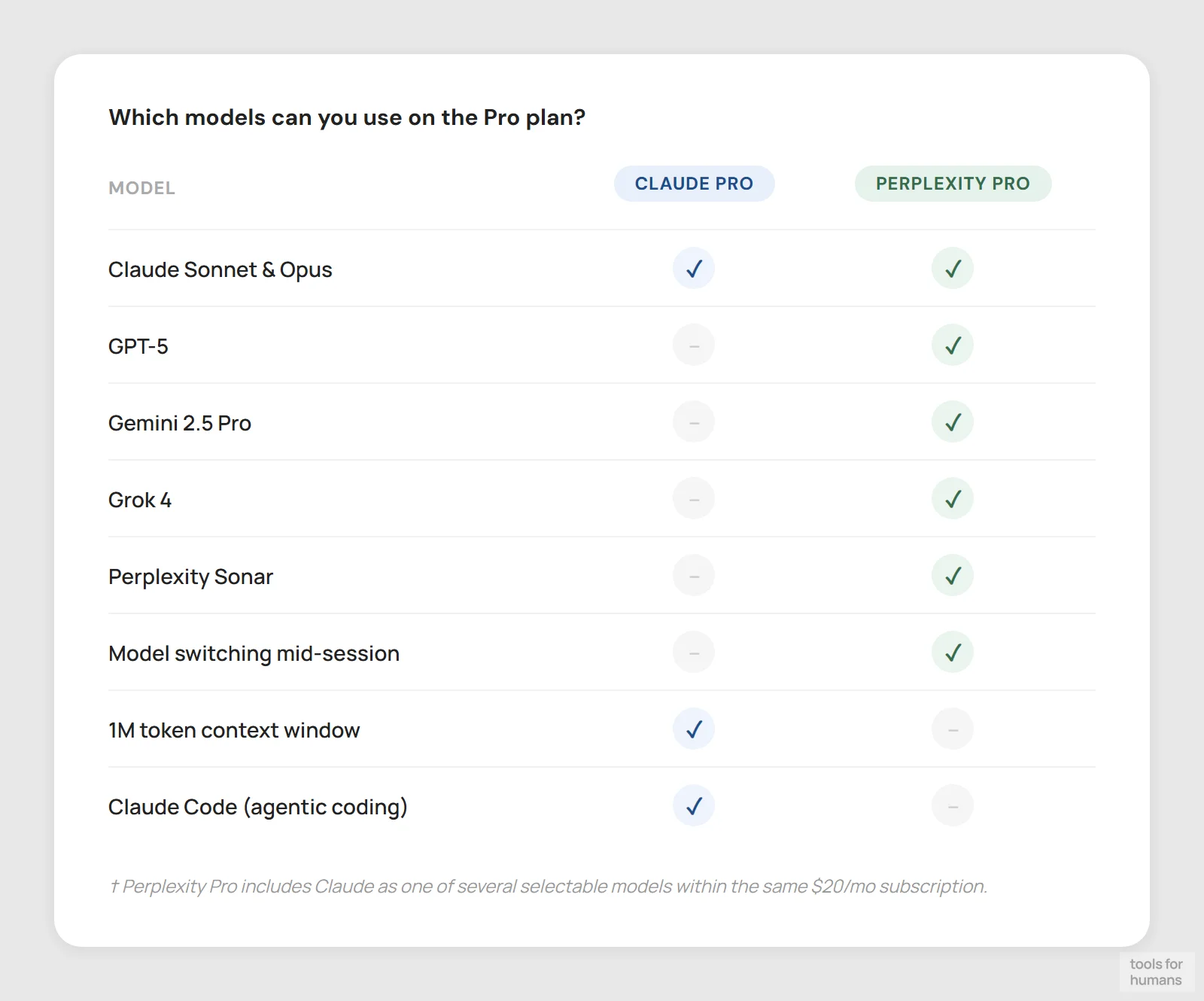

At the $20/month Pro level, Claude includes Claude Code and Claude Cowork, plus access to more models and Projects for organizing conversations. Perplexity Pro gives unlimited Pro searches, access to third-party models like GPT-5 and Gemini, image generation, and more file upload capacity. Claude also offers a Max plan (from $100/month) for heavier usage, and a Team plan starting at $25/seat/month. Perplexity has an Education Pro plan at $10/month for verified students.

context window and memory

Winner: Claude
Claude: Up to 1M token context window
Perplexity: Session context, 50 files per space (Pro)

Claude handles up to 1 million tokens in a single conversation, with context compaction that summarizes older history to keep the session going. This makes it practical for working with large codebases, lengthy legal documents, or long research threads where earlier context needs to stay relevant. In our testing, Claude handled references to content from 10+ messages back more reliably than Perplexity did.

Perplexity maintains session context within a thread and Pro users can save spaces with up to 50 file uploads, but its architecture is optimized for query-response cycles rather than sustained multi-turn reasoning. It's more likely to treat each question somewhat independently, which works fine for research tasks but limits its usefulness for iterative, document-heavy work.

G2 user ratings back this up: Claude scores 8.7 in context management versus 7.9 for Perplexity. For anything involving a large document you want to interrogate across multiple questions, or a long coding session where the assistant needs to remember earlier decisions, Claude is the more reliable tool.

AI models and flexibility

Winner: Perplexity
Claude: Anthropic models only (Sonnet, Opus)
Perplexity: GPT-5, Gemini, Grok, Claude, Sonar

Perplexity's model selection is one of its underappreciated strengths. The Pro plan gives access to GPT-5, Claude 4, Gemini 2.5 Pro, Grok 4, and Perplexity's own Sonar models within a single subscription. If you want to compare answers across providers or use the best model for a specific task type without managing multiple subscriptions, that's a real practical advantage.

Claude, naturally, only gives you access to Anthropic's own models: primarily Sonnet and Opus variants, with different capability and cost profiles. The Pro plan expands model access beyond the default, but you're staying within Anthropic's ecosystem. This isn't a flaw — the models are strong — but if you rely on GPT or Gemini for specific workflows, Perplexity lets you access them in a search context without a separate OpenAI or Google subscription.

For users who specifically want Claude's reasoning and writing quality, this point is moot. But for users who want model flexibility plus search, Perplexity's multi-model setup is worth noting.

coding and developer tools

Winner: Claude
Claude: Claude Code included in Pro plan
Perplexity: No code execution or agentic coding

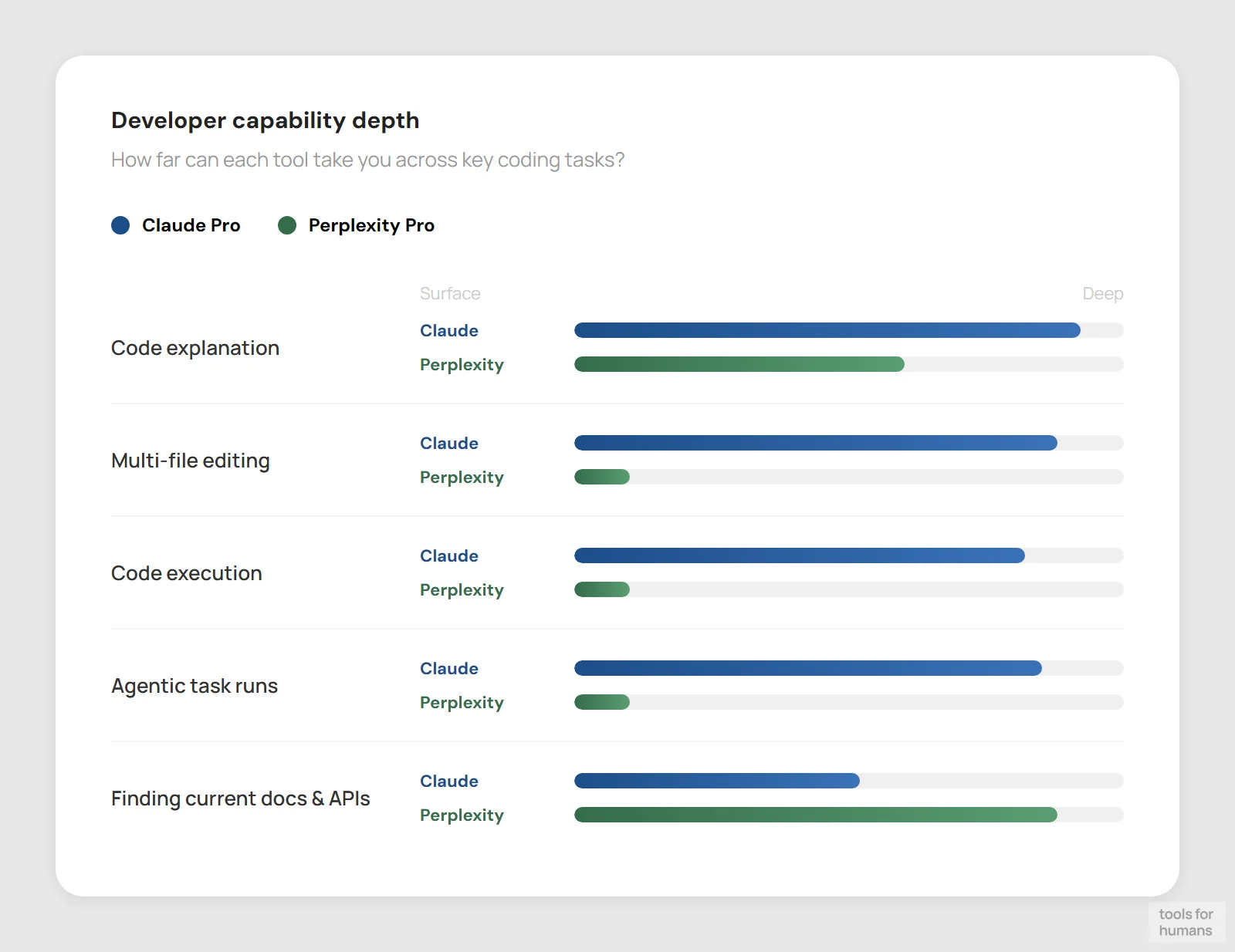

Claude has invested heavily in developer tooling. The Pro plan includes Claude Code, a terminal-based agentic coding tool that can work across files, run commands, and handle multi-step programming tasks with minimal supervision. Claude Cowork is also included, aimed at collaborative workflows. For developers who want to use AI for more than autocomplete — architecture decisions, debugging across a codebase, writing tests — Claude's depth here is genuine.

Perplexity isn't a coding tool. You can ask it programming questions and get sourced answers, which is useful for looking up library docs or finding recent Stack Overflow threads, but it doesn't have an integrated code execution environment or the ability to work iteratively through a complex engineering task. Its utility for developers is closer to a smarter documentation search than a coding assistant.

If coding is part of your workflow, Claude is the clear choice. Perplexity's strength for developers is in research: finding recent package releases, checking compatibility notes, or reading through API documentation quickly.

integrations and ecosystem

Winner: Claude
Claude: Slack, Google Workspace, MS365, MCP
Perplexity: Browser extension, no deep integrations

Claude connects to Slack, Google Workspace, and Microsoft 365 (on Team and Enterprise plans). It supports remote MCP connectors, allowing custom integrations with external tools and data sources. For teams that want to bring Claude into an existing workflow rather than switching tabs, this matters. The Enterprise plan adds organization-wide search across connected services.

Perplexity is largely self-contained. There's a browser extension for quick queries and the app works across web and mobile, but it doesn't integrate deeply with other tools. You go to Perplexity, get your answer, and take it elsewhere. That simplicity works fine for individual research tasks but creates friction for teams trying to build AI into a repeatable workflow.

For solo users doing one-off research, the integration gap doesn't matter much. For teams or anyone who wants AI embedded in where they already work, Claude's platform approach — with connectors and workspace integrations — is a meaningful differentiator.

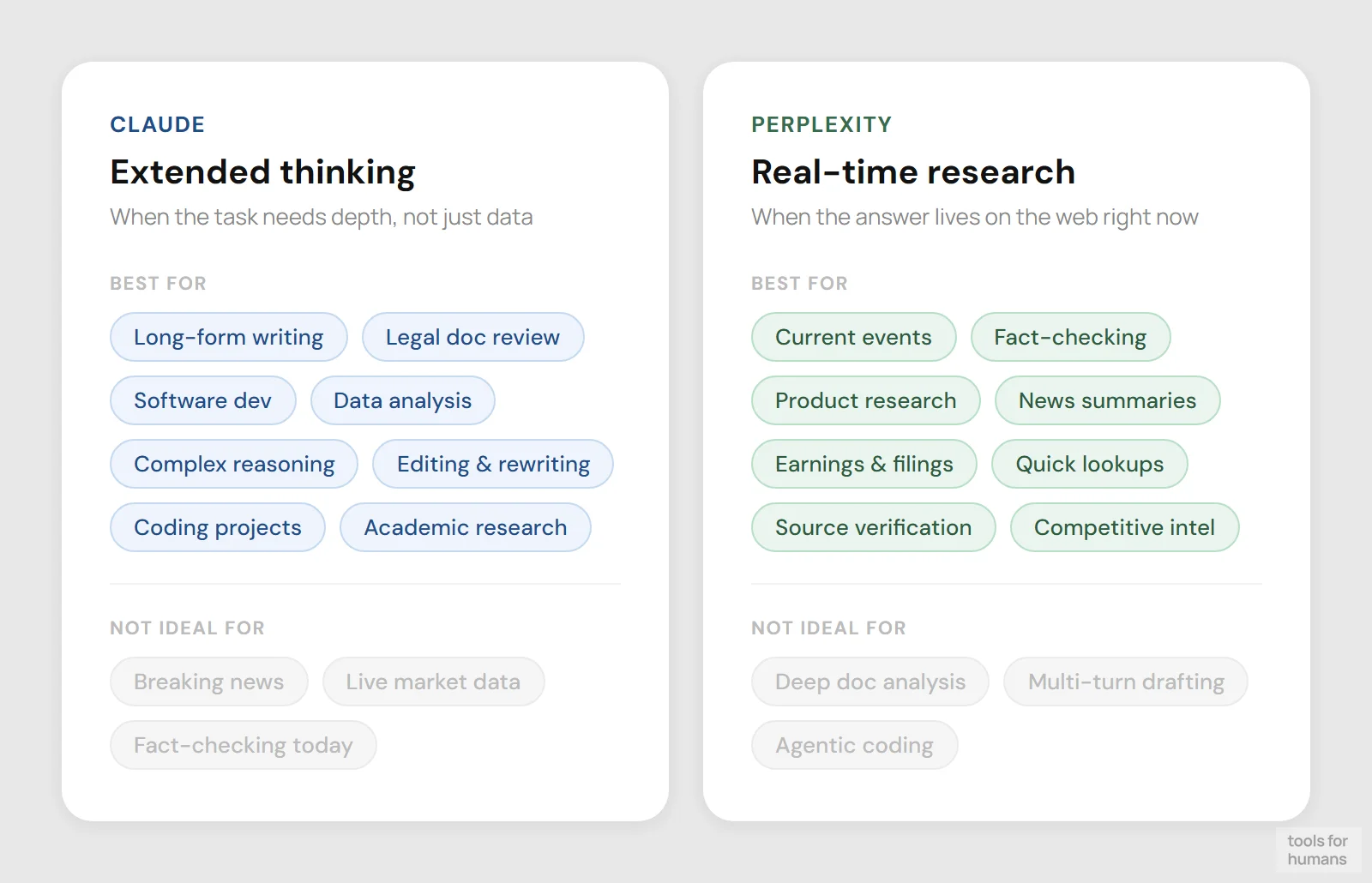

use case fit

Winner: draw
Claude: Writing, code, analysis, document work
Perplexity: Research, fact-checking, current events

Claude fits best when the task requires sustained reasoning, careful writing, or working through a complex problem over multiple turns. Legal document review, software development, long-form drafting, data analysis, and academic research that doesn't depend on cutting-edge sources are all strong fits. The ethical guardrails from Anthropic's Constitutional AI approach may also make it a better fit for regulated or sensitive environments.

Perplexity fits best when you need a fast, sourced answer to a factual question. Competitive intelligence, news monitoring, fact-checking, and literature discovery all play to its strengths. It's also a strong choice for anyone who wants access to multiple frontier AI models without managing separate subscriptions.

The two tools aren't interchangeable, but they also aren't mutually exclusive. Some users keep both: Perplexity for research and fact-gathering, Claude for drafting and analysis. At $20/month each, that's a $40/month stack, which is reasonable if both workflows are part of your daily work.

the verdict

Choose Claude if your work involves writing, coding, or working through complex problems where reasoning quality and long-context handling matter. It's also the right call for teams who want AI integrated into Slack, Google Workspace, or Microsoft 365, and for developers who want agentic coding capabilities without a separate tool.

Choose Perplexity if your primary need is fast, cited answers from the live web. Competitive research, news tracking, fact-checking, and any task where you need to verify sources quickly are where it earns its subscription fee. The multi-model access on Pro is also worth noting if you want GPT-5 or Gemini without separate subscriptions.

Choose Perplexity if you just need a smarter search engine. Choose Claude if you need an AI assistant that can handle sustained analytical work. For most professionals who write, code, or analyze documents regularly, Claude is the more capable daily driver.



toolsforhumans editorial team

Reader ratings and community feedback shape every score. Since 2022, ToolsForHumans has helped 600,000+ people find software that holds up after launch. The picks here come from that.
